AI-generated content can seem like an easy win for businesses, especially when the promise is simple enough to sell internally: publish more crypto content, cover more keywords, use fewer resources, and pick up more organic traffic along the way.

On paper, it may sound cost-effective, and in some cases AI can absolutely help with research, structure and early drafting. But once that logic turns to pumping out large amounts of thin and repetitive pages, the whole strategy starts to backfire, and in the crypto space, it can become a bigger problem than some companies seem willing to admit.

The reason is pretty straightforward: A company might think they’re improving their search visibility, but if the pages they publish feel like generic fluff, the content stops looking like a serious effort to inform readers and starts looking like a cheap attempt to occupy the search results.

That defeats the purpose of creating the pages in the first place. No goal is achieved; you’re just throwing content at your website without a strategy and hoping it will get you results.

If readers don’t trust you, how will they convert or take action? And if your pages start to slide down the rankings, how will your platform, exchange or dapp be discovered?

When AI Slop Turns into Scaled Content Abuse

Google’s policy on scaled content abuse is pretty clear: the problem is creating and publishing lots of web pages mainly to manipulate search rankings while providing users with little or no value in return, and that standard applies regardless of how the content is created.

That’s worth pointing out, because many people still talk as if the real problem is the tool, when Google is actually focused on why the content is being published in the first place and whether it offers readers anything of value.

So when a website starts pumping out massive amounts of unoriginal, low-value pages just to gain more search visibility, it’s moving right into the territory that Google says can lead to lower rankings or even removal from search results.

And this is where some crypto companies should probably be more honest with themselves. If AI is used to support a real editorial process where a writer or editor fact-checks, adds context, sharpens the argument, and makes sure the finished piece actually helps the reader, that’s one thing.

Google’s own guidance says that generative AI can be useful for research and structure, and that point deserves to be part of the conversation. But when a company starts publishing fully generated articles with little or no editorial review because it wants to rank for more queries at a lower cost, it’s getting very close to the kind of scaled output Google warns about.

There’s also a real difference between using AI to aid the writing process and using it to dump content out at scale. Some publishers use AI for research, brainstorming, or outlining, then hand the piece to a human writer or editor who verifies the facts, adds original reporting, and makes sure the article actually has something worth saying.

It’s the same old SEO playbook…with a faster machine

From that perspective, AI slop is really just the same old mass-page SEO playbook, with a faster machine behind it and a much lower cost to produce weak content.

That’s one of the reasons it’s getting worse. Once publishing pages in bulk starts to feel cheap and easy, it becomes much easier to keep feeding the machine instead of stopping to ask what is actually worth publishing. And with Google’s March 2026 spam update recently rolled out across all languages, it’s clear the company is still refining how it detects and handles web spam at scale.

That doesn’t mean every weak article will be hit right away, but it does mean enforcement is not standing still.

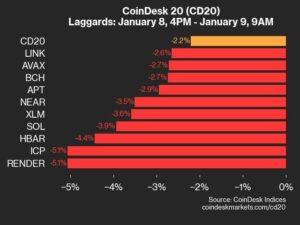

Some crypto companies are already using AI to publish large volumes of pages aimed mainly at attracting search traffic.

Sometimes it takes the form of comparison sites built around competitor terms and location-based keywords. In other cases, it appears on token pages, wallet guides, airdrop explanations, exchange reviews, educational content, or service pages that look like they were created to get clicks without providing any real value.

When you take a closer look at how these pages are made and how little they actually do for readers, it becomes much easier to understand the search risk.

Given Google’s scaled content abuse policy, crypto businesses that rely on this kind of low-value material should carefully consider whether these pages even belong in search. In many cases, setting them to “noindex” may be the safest move.
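For teams that do go the “noindex” route, here is a minimal sketch of what that can look like in practice. The function names and the `low_value` flag are hypothetical and only there for illustration. The two directives themselves, the robots meta tag and the `X-Robots-Tag` response header, are the standard ways of asking search engines to keep a page out of their index.

```python
# Illustrative sketch only: the helper names and the low_value flag are
# assumptions, not part of any CMS or library.

def robots_meta_tag(low_value: bool) -> str:
    """Return the robots <meta> tag to place in a page's <head>."""
    content = "noindex" if low_value else "index, follow"
    return f'<meta name="robots" content="{content}">'


def robots_headers(low_value: bool) -> dict:
    """Return extra HTTP response headers, useful for non-HTML files such as PDFs."""
    return {"X-Robots-Tag": "noindex"} if low_value else {}


if __name__ == "__main__":
    # A thin, auto-generated comparison page that should stay out of search.
    print(robots_meta_tag(True))
    print(robots_headers(True))
```

One caveat: a page set to “noindex” still has to be crawlable. If robots.txt blocks it, the crawler never gets to see the directive.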

So crypto companies that treat mass AI output as a marketing shortcut are taking a real gamble in an environment where Google keeps updating enforcement in plain view.

There is a smarter way to use AI

There is still a smart way to use AI in publishing, and it starts with keeping the SEO strategy in place while using AI for support tasks where it can really save time. Research help, idea generation, outlining and early structuring all make sense, especially for crypto companies that want to move faster without lowering their standards.

Google explicitly says that these uses can be helpful, which gives crypto companies a sensible model to follow: let AI accelerate the early groundwork, then leave the reporting, writing, editing, verification and final judgment in human hands.

That approach is safer for search, and it also leads to better content because people can usually tell when something has been properly thought out, carefully put together and written by someone who actually knows what they’re talking about. That difference matters even more in crypto, where trust already has to be earned more carefully.

The crypto companies that come out ahead will be the ones that use AI as a support tool in a proper editorial process because it gives them a better chance of creating work that people actually want to read, cite and return to.