- iOS and iPadOS users in the UK must now verify their age

- Otherwise, certain features may be disabled for under-18s

- Meta and Google have been fined for their child safety policies

It looks like we’re nearing a showdown when it comes to under-18 phone use: Apple is rolling out mandatory age verification for iPhone and iPad users in the UK, just a day after Meta and Google were hit with a massive fine in a landmark social media lawsuit.

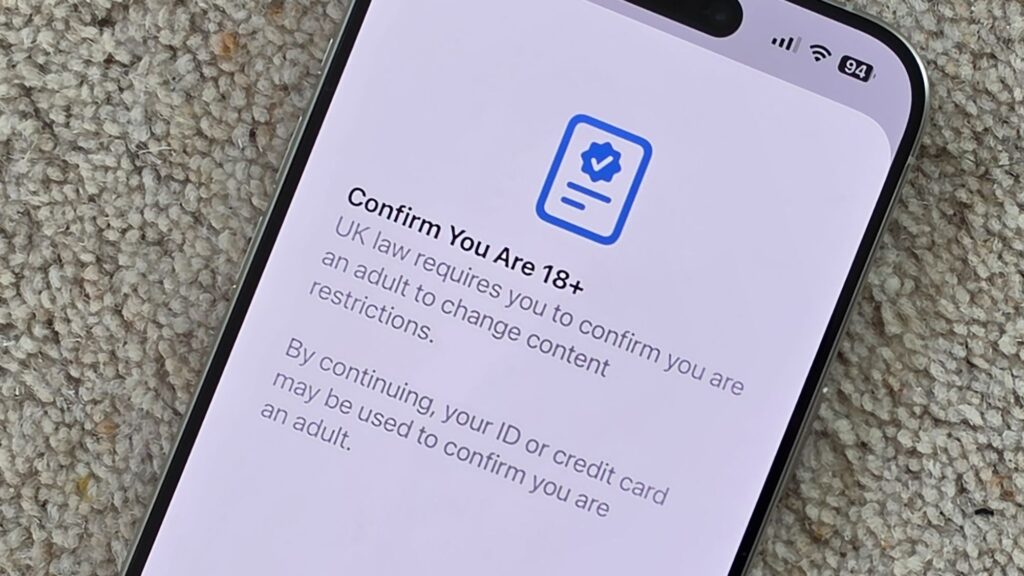

Starting with Apple's verification rollout: it is part of the new iOS 26.4 and iPadOS 26.4 updates for UK users. If you're in the UK, you'll be asked to register a credit card or scan an ID to prove you're 18 or over – unless Apple has previously verified your age.

Apple sets out the details in a support document, saying the verification process is "required by law in some countries and regions" to "download apps, change certain settings, or perform other actions with your Apple Account". If you need to verify your account, you will see a message in the Settings menu.


Although device-level age verification of this kind is not required by UK law as it currently stands, recent legislation does require age checks for adult websites (including pornographic websites). Until now, it has been up to the websites themselves to carry out the verification, but there have been calls for checks to be done at the device level as well.

As the UK government tries to clamp down on social media use by under-16s, a law similar to the one implemented in Australia now looks likely. Apple may be aiming to get ahead of any such decision, and according to the BBC it has been working closely with the regulator Ofcom on the new feature.

It is not clear exactly what will happen if you are under 18 or unable to verify that you are an adult. According to Apple's support document, you may find certain features restricted or be asked to join a Family Sharing group run by a parent, but the wording suggests this will vary from case to case.

Another reason Apple may have taken this step is the landmark social media lawsuit that just reached a conclusion in Los Angeles: Meta and Google have been ordered to pay $6 million.

The plaintiff's lawyers had described the apps developed by Meta and Google as "addiction machines" and argued that the tech companies had not done enough to prevent younger children from accessing these platforms, or to protect them from the harms associated with too much screen time.

In a separate lawsuit in New Mexico that reached a verdict earlier this week, Meta was ordered to pay $375 million. The jury found that Meta had been aware of child predators on its platforms and had not done enough to block them.

Meta and Google both intend to appeal: “Teen mental health is deeply complex and cannot be linked to a single app,” a Meta spokesperson said. “We will continue to vigorously defend ourselves as each case is different, and we remain confident in our record of protecting teenagers online.”

And while Apple’s age restrictions have been welcomed by Ofcom and child protection groups, not everyone is happy about it: some see it as another step towards “mass surveillance” and even more tracking and logging of user data, while others argue that the responsibility for protection should lie with parents rather than device manufacturers.

The momentum certainly seems to be heading in one direction right now – and with AI bots posing yet another challenge for the internet, it's likely that more verification checks will start popping up in the future.
