Adding eyes to artificial intelligence is always a tricky thing. Do you want it to see everything you are doing all the time? Certainly not, but I think most of us agree that an AI visual assist, available when you need it, could be quite practical. Microsoft’s new Copilot Vision is possibly one of the most promising uses of AI-based visual abilities I’ve seen yet.

Microsoft revealed the Copilot Vision update to its Windows app and mobile apps (you can point your camera at things and Vision can identify them for you) during a splashy, combined Copilot and Microsoft 50th anniversary event.

Copilot all but got a brain transplant, using both homegrown (Microsoft AI, or MAI) models and OpenAI GPT generative models to provide updates across memory, search, personalization, and vision functions.

Now that I’ve seen Copilot Vision in action, I can tell you it’s one of the most exciting and important updates of the bunch, even if it comes in two stages.

In the version you can access in the Windows desktop app right now, Copilot Vision can see the apps you are running on the desktop. When you open Copilot, either by selecting the icon or by pressing the Copilot key on your keyboard, you can now select the new glasses icon.

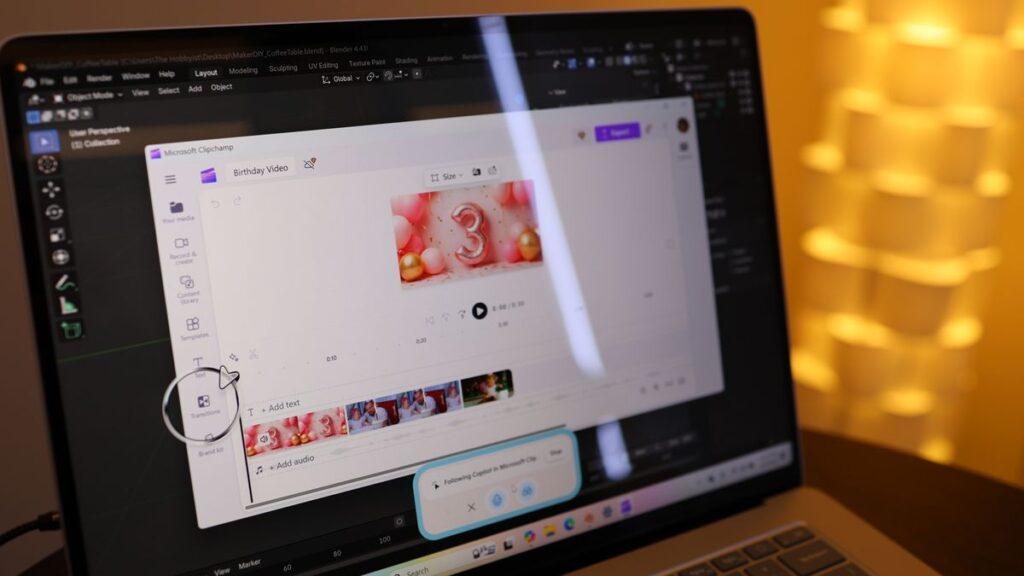

This shows you a list of open apps; in our case we had two running: Blender 3D and Clipchamp. This means that although Copilot is aware of the apps running on Windows, it doesn’t look at them automatically.

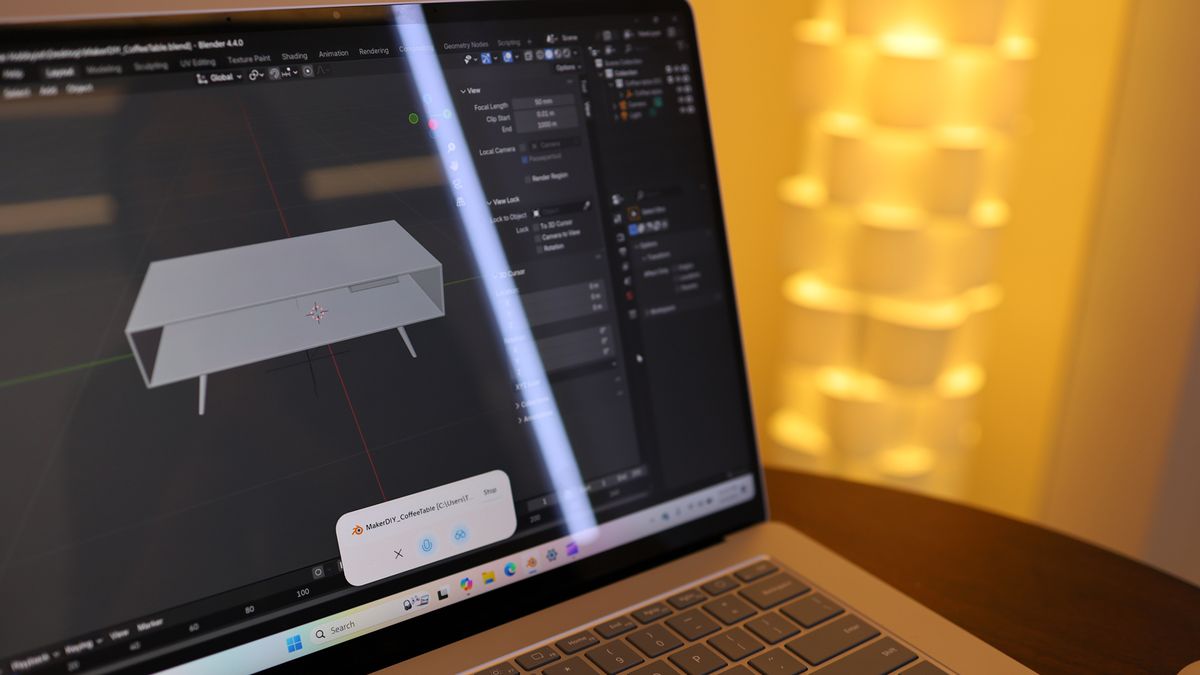

We chose Blender 3D, and from that moment on, something changed in my Windows existence. I realized that Copilot can really see which app you are running, and instead of guessing at your intent, it answers based on the app and even the project you are working on.

A 3D coffee table project was open, and using our voice we asked how to make the table design more traditional. Our prompt contained almost no details about the app or project, but Copilot’s answer, delivered in a lovely baritone, was fully contextual.

We then switched gears and asked how to add annotations in the app. Copilot started answering, but we interrupted and asked where we could find the icon to add the annotations. Copilot adjusted quickly and immediately told us where to find it.

This can prove extremely useful because you no longer have to break your flow to jump out and search, or even to explain which app or project you are working on. Copilot Vision looks and knows.

However, let me tell you about what is coming next.

We followed the same steps to open Copilot and access the Vision component, but this time we pointed Copilot to our open Clipchamp project.

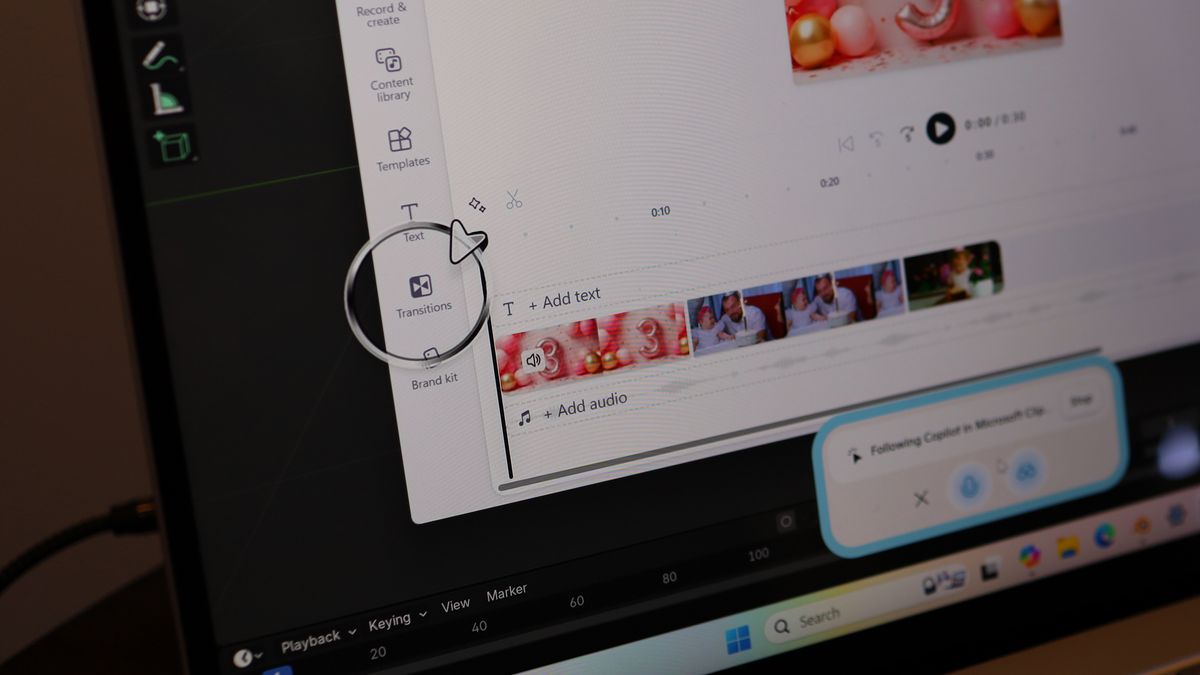

We asked Copilot how to make our video transitions smoother. Instead of a text response explaining what to do, Copilot Vision showed us exactly where to find the necessary tools in the app.

A giant arrow (inside an animated circle) appeared on the screen and pointed to the transition tool it recommended we use while it explained the necessary steps. We ran through this demo a few times, and because of its still-under-development nature, it didn’t always work.

When it did, however, it pointed to a potentially exciting change in how we work with apps in Windows.

We have also seen a demo video showing Copilot Vision digging even deeper into the Photoshop app to find the right tools. This, my friends, is Clippy on steroids.

Imagine a future where you use text prompts or your voice to find out how to perform tasks in an open app, and Copilot Vision digitally takes your hand and guides you through them. There is no sign that it will take app-level actions on your behalf, but this could be an incredible visual assistant.

The good news is that the Copilot Vision that at least knows which app and project you are working on is available now. The bad news is that the Copilot Vision I really want has no specific timeline. But I have to assume it won’t be long. After all, we saw it live.