- A robot just beat some elite table tennis players

- Sony AI’s Project Ace is good at competing against unpredictable human players

- Success here could mean that it becomes easier to use AI to train future robots to deal with the real world

In competitive table tennis, the ball can travel at speeds of up to 70 mph and it can go anywhere. Of course, there is some predictability based on stroke, spin and how the ball hits the table, but there are also endless possibilities, which now, it seems, a robot has mastered.

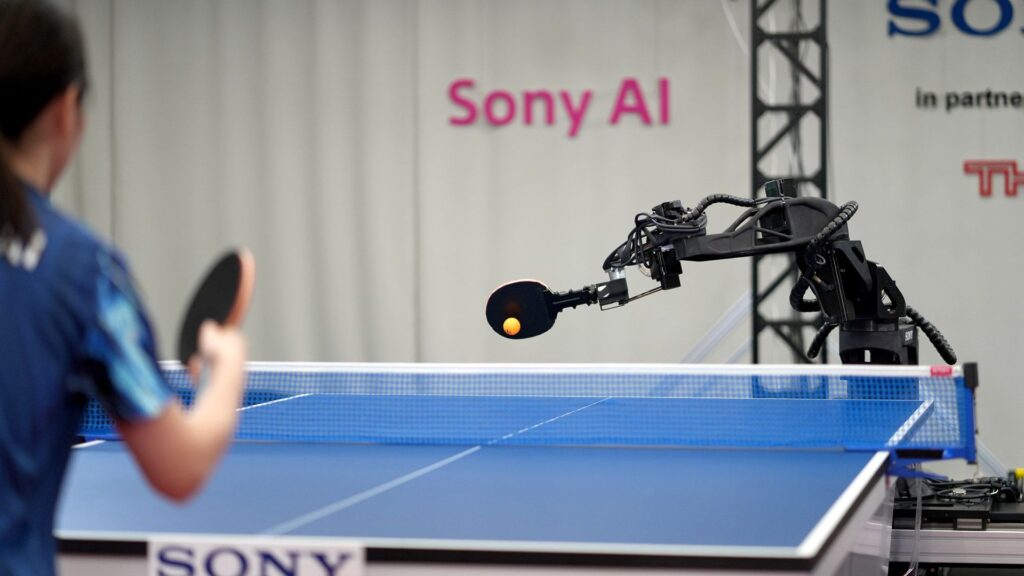

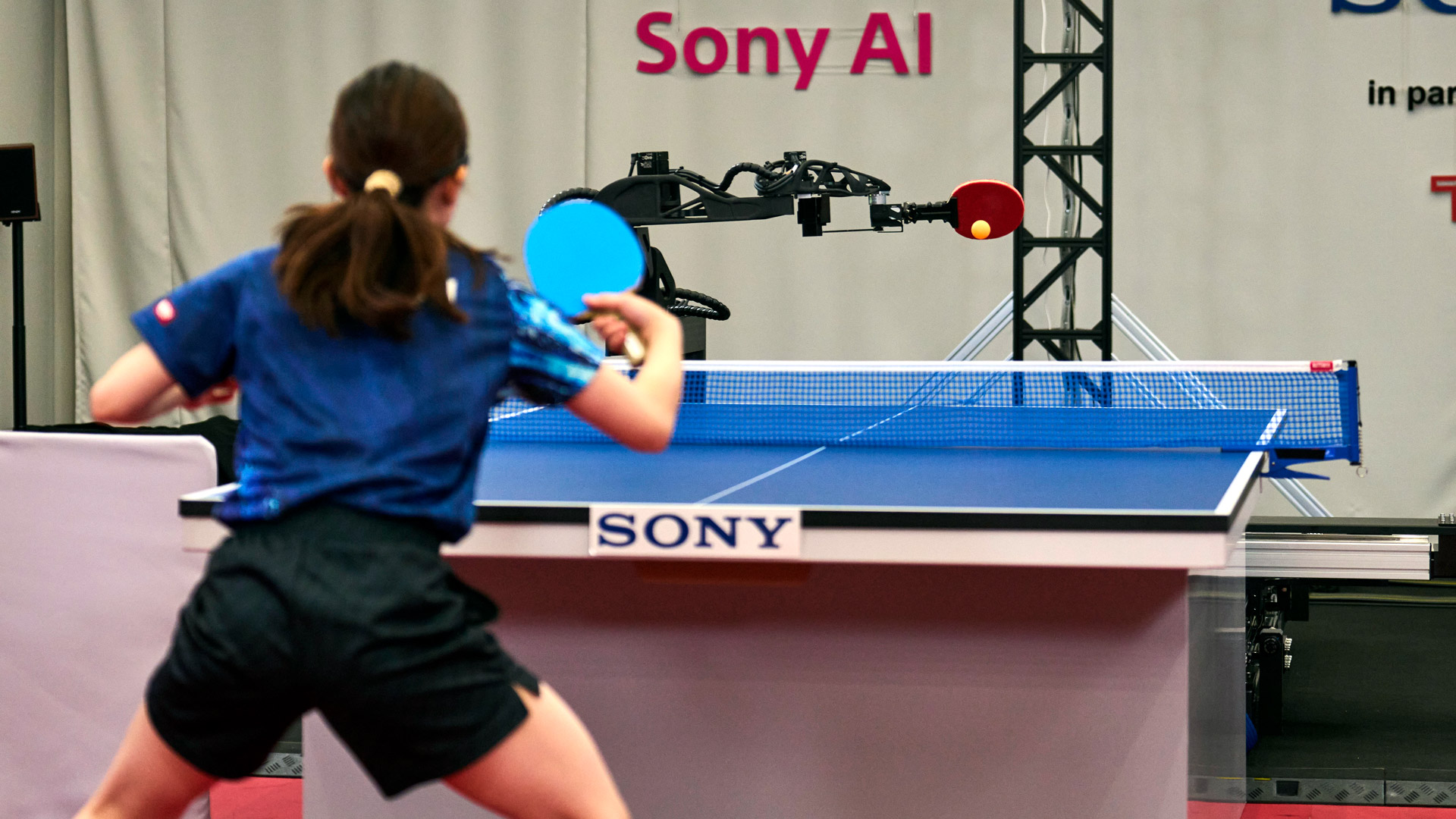

Sony AI’s Project Ace is the first robot to beat multiple elite-level table tennis players in an International Table Tennis Federation-style arena and under the watchful eyes of authorized referees.

In a new Nature article, “Play elite table tennis players with an autonomous robot,” Sony AI researchers describe their work and how they built and used AI to train a robot, “Project Ace”, to not just play table tennis, but to do so at a pro level.

“Ace achieved three wins in five matches against elite players, along with competitive performance in the remaining matches. These results demonstrate the potential of physical AI agents to outperform human experts in real-time interactive tasks,” the researchers wrote.

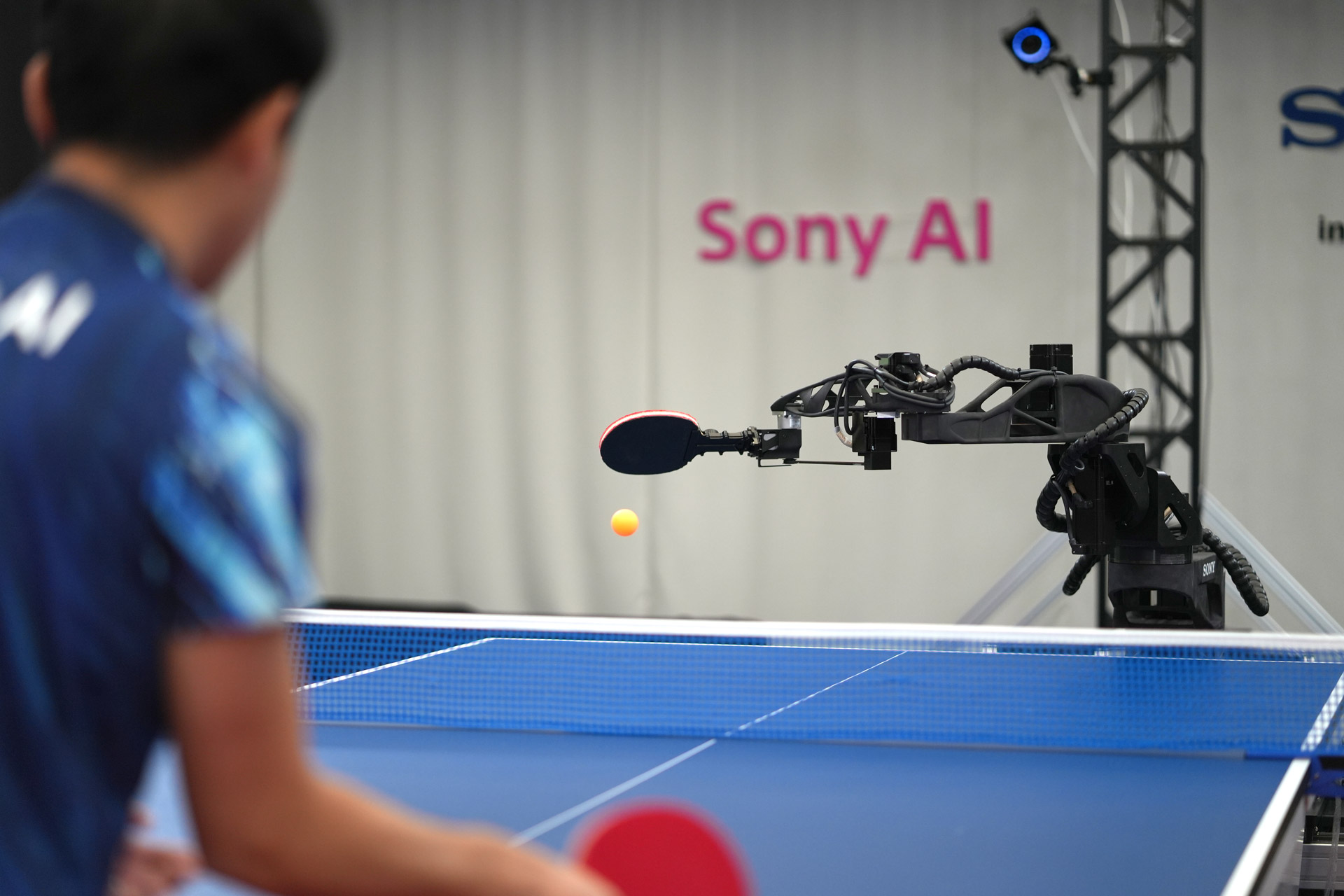

Project Ace is a clever combination of “high-speed perception,” a control system based on reinforcement learning (rewarding good behavior) and “high-speed robotic hardware.”

No feet, but a wicked backhand

Ace doesn’t look like any human ping-pong player you’ve ever seen. Instead, it glides in four directions on a custom track system, while its trunk rotates 360 degrees and the fully articulated arm and wrist adjust on the fly to both serve and return the ball. You may have seen ping-pong-playing robots before (I remember seeing a lumbering one at CES 2026), but not like this. The speed alone is amazing.

Yet it is the AI-based reinforcement learning and simulated training that make Project Ace special and successful. During training, it was able to act out all possible game scenarios. It even practiced against a virtualized version of itself. But it is the “model-free” reinforcement learning that at least partially allows Project Ace to adapt to unpredictable, elite human competitors.

Equipped with built-in sensors and an array of nine cameras positioned around the robot, Project Ace can see things that most human competitors, even elite players, might miss. The spin of the ball, for example, is a determinant of where the ball will go next.

As the researchers explain in the project video, perception is one of the most important innovations: “So it is the only system in the world that can measure the spin of an unchanged table tennis ball at this speed.”

Perhaps the secret sauce here, though, is a technique called “privileged critic” that Sony AI developers used in the training simulations. The privileged critic was given access to perfect match information married to live sensor data. In effect, it compares what should happen with what actually does. That learning is how the robot prepares for the unexpected.
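
For readers who want to see the idea in miniature, here is a toy sketch of a privileged critic, sometimes called an asymmetric actor-critic. It is not Sony AI's code and makes no claim about how Project Ace is actually built. Everything in it is an assumption for illustration: the one-shot “meet the ball” task, the function name `train_actor_with_privileged_critic`, the linear actor, the quadratic critic and all the noise and learning-rate values. The point is only that the critic scores each attempt using the simulator's true ball position, while the actor has to act on a noisy sensor reading.

```python
import random


def train_actor_with_privileged_critic(steps=5000, seed=0):
    """Toy asymmetric actor-critic on a one-shot 'meet the ball' task.

    Hypothetical setup, not Sony AI's: the ball's true position x is the
    privileged state, and the actor only sees a noisy reading of it.
    """
    rng = random.Random(seed)
    sensor_noise = 0.1   # std-dev of the noise on the actor's observation
    explore_std = 0.2    # std-dev of the actor's Gaussian exploration
    actor_lr, critic_lr = 0.01, 0.05

    w = 0.0              # actor: paddle target = w * observation
    v0, v1 = 0.0, 0.0    # critic: V(x) = v0 + v1 * x**2, uses the TRUE x

    for _ in range(steps):
        x = rng.uniform(-1.0, 1.0)               # true ball position (simulator only)
        obs = x + rng.gauss(0.0, sensor_noise)   # what the actor is allowed to see
        eps = rng.gauss(0.0, 1.0)
        action = w * obs + explore_std * eps     # sampled paddle position
        reward = -(action - x) ** 2              # closer to the ball is better

        # The critic predicts the expected reward from the privileged state,
        # so the advantage says how much better or worse this attempt was.
        advantage = reward - (v0 + v1 * x * x)
        v0 += critic_lr * advantage
        v1 += critic_lr * advantage * x * x

        # Policy-gradient step: d/dw log N(action; w * obs, explore_std**2).
        grad_log_pi = (action - w * obs) / explore_std ** 2 * obs
        w += actor_lr * advantage * grad_log_pi

    return w


if __name__ == "__main__":
    print("learned actor gain:", train_actor_with_privileged_critic())
```

In this sketch the learned gain `w` should settle close to 1, meaning the actor learns to trust its noisy reading almost fully. The critic exists only during training, so nothing privileged is needed once the actor is deployed.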

There is a moment in the video where you can see this at work. The elite human player hits a ball that catches the net and heads off in a different, perhaps unexpected, direction. Project Ace had clearly already planned a return, but it managed to adjust to the ball’s new trajectory and hit it back. It all happens within milliseconds, and one could argue that a human player would have failed to make the same quick adjustment.

“It totally blew me away,” Sony AI Director and Lead Engineer Peter Dürr said in a release about Project Ace.

Like Sony AI’s previous project, which taught an AI to beat expert human players in a Gran Turismo simulation, Project Ace is not about beating professional players or leveling up to Olympic-class table tennis. It’s about helping robots operate in an unpredictable world.

Most people who see humanoid robots operating in home environments comment on their speed, or lack thereof. The robots move deliberately for safety and to cope with the unexpected. However, Project Ace proves that robots can be trained, and can train themselves, to manage an unpredictable world at speed.

Future table tennis competition is also not completely off the table. After all, the Sony AI team is constantly working to improve Project Ace’s gameplay. They note, for example, that the robot tends to move in and hit earlier than human opponents. Sometimes stepping back and waiting on the ball can yield a more strategic return.

If they fix it (or maybe Project Ace fixes it on its own), why would the Olympics be off the table?
